Background, theory & explanation

The approach taken by the ‘Emissions-Temp Model’ is compared with that of ‘The Cardinal Model’.

The theoretical basis underlying the models is reviewed, leading to discussions of:

- Equations used in climate models and the ‘Emissions-Temp Model’

- Sophistication of the ‘Simple Model’

HOW DOES ‘THE EMISSIONS-TEMP MODEL’ COMPARE TO THE CARDINAL MODEL?

The Cardinal Model links the observed total greenhouse effect to atmospheric levels of greenhouse gases. Using empirical parameter modelling and the well-known statistical technique of variance minimisation, the values of the Cardinal Model’s empirical parameters fit the observed greenhouse effect so well that the variance (a statistical measure of how far a set of data points is spread out from its mean) is reduced by 99.99%.

Putting this into perspective: the observed total greenhouse effect for the period studied exhibited a standard deviation of 3.29 W m⁻² (≡ +/- 0.7 °C).

The deviation between the values calculated by the Cardinal Model and the observed values is less than 0.03 W m⁻² (≡ +/- 0.006 °C) for each of the 447 months of data.

It is this near-perfect fit that provides confidence that the Cardinal Model is an accurate representation of what is going on with the greenhouse effect (see the pages ‘The Greenhouse Effect’ for a fuller description of the process).

That variations in the total greenhouse effect are associated with the temperature at the Earth’s surface is undeniable. However, the directness and timing of the modifying effect are unclear.

Causality is popularly assumed to work like this: emissions elevate greenhouse gas concentrations, which in turn cause more of the upwelling surface heat radiation to be absorbed (the greenhouse effect), heating the atmosphere.

This causes Global Warming and the elevation of surface temperatures which, in turn, causes Climate Change, Climate Breakdown and ultimately Climate Armageddon.

There is a possibility (for which good evidence exists) that the direction of causation is the reverse: elevated surface temperatures cause greenhouse gas concentrations to increase.

Association, even strong correlation, can at most suggest causation, and it remains silent on the direction of causation.

An important point to make is that this empirical parameter study reveals 'interesting' relationships of association.

The use of the term ‘radiative forcing’ in explanations of climate studies popularises the idea that the direction of causation is as asserted by the researchers. If causation is asserted when a study is initiated, any association found is, without further thought, taken as ‘proof’ that causation runs in the asserted direction.

The ‘Emissions-Temp Model’ attempts to explain variation in anomalous surface temperature (standard deviation 0.4 °C ≡ +/- 1.9 W m⁻²) by empirical parameter models linked to atmospheric levels of greenhouse gases.

These are links of association and correlation - not causation.

I’m not suggesting that greenhouse gases have nothing to do with rising global temperatures, but asserting direct causation is groundless.

My analysis starts by asking: ‘can any greenhouse gas, or combination of gases, be associated with all observed surface temperature variations?’

The answer is ‘no . . but . . .’

The ‘but’ refers to the extent of variance reduction.

A perfect-fit model would explain 100% of the variance in the modelled observations, as ‘The Cardinal Model’ very nearly does.

If we take the reduction in variance achieved by the model as a measure of ‘fit’, then for the better cases studied the answer becomes ‘up to 90%’.
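The ‘variance reduction’ used as a measure of fit can be written as 1 − var(residuals) / var(observed). The sketch below is a minimal illustration of that calculation; the observed and modelled values are made up, and the function names are hypothetical rather than taken from the author’s own tools.

```python
def variance(values):
    """Population variance: mean squared deviation from the mean."""
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)

def variance_reduction(observed, modelled):
    """Fraction of the observed variance removed by the model (1.0 = perfect fit)."""
    residuals = [o - m for o, m in zip(observed, modelled)]
    return 1.0 - variance(residuals) / variance(observed)

# Hypothetical anomalies (degrees C) and model values, for illustration only
observed = [0.10, 0.30, 0.20, 0.50, 0.40, 0.60]
modelled = [0.12, 0.27, 0.22, 0.46, 0.43, 0.58]
print(f"variance reduction: {variance_reduction(observed, modelled):.1%}")

# Cross-check on the Cardinal Model figures: residuals never exceeding
# 0.03 W m^-2 against an observed standard deviation of 3.29 W m^-2
print(f"at least {1 - (0.03 / 3.29) ** 2:.4%}")
```

Because the residual variance can be no larger than the square of the largest deviation, a deviation below 0.03 W m⁻² against a spread of 3.29 W m⁻² guarantees a reduction of at least about 99.99%, consistent with the figure quoted for the Cardinal Model.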

This might be described as 'quite a good fit'.

Other factors certainly impact global average surface temperatures, but only relationships between variations in greenhouse gas concentrations and temperature variations are considered in this study.

However, the ‘residuals’ from the ‘Emissions-Temp Model’ cases (i.e. those variations that cannot be associated with greenhouse gas levels) represent a minimum level of attribution to other factors.

These residuals are interesting because they highlight warming and cooling periods that are not, and cannot be, associated with (or caused by) humans burning fossil fuels.

IS THERE A THEORETICAL BASIS FOR LINKING THE GREENHOUSE EFFECT TO VARIATIONS IN TEMPERATURE?

As explained in the pages ‘The Greenhouse Effect’, the greenhouse effect is a near-instantaneous process (to human observers).

This instantaneous radiative process produces tiny increments of temperature in the atmosphere. There are constant variations in the magnitude of these moment-to-moment tiny increments.

Given any warm patch of the Earth’s surface (warmer than minus 50 °C) radiating heat upwards, greenhouse gases not only absorb some of this heat radiation but also aid its transmission through to the top of the atmosphere and out into space.

This cooling effect is particularly noticeable at night when skies are clear, as anyone who has experienced a freezing night in a ‘hot’ desert will know.

Clouds confound this effect by reflecting radiation from the sun back into space so reducing daytime warming.

At night (and also during the day) clouds modify the so-called greenhouse effect in complex ways. A simple explanation of the impact of clouds is included in 'The Greenhouse Effect'.

Over longer periods of time (seconds, minutes, hours, days, months and years), thermodynamic processes redistribute this greenhouse heating.

These processes include mass transport (wind, turbulence, ocean currents and other differential density-driven processes) and heat conduction (assisted by convection), occasionally bolstered by radiative processes.

Latent heat processes (principally evaporation, condensation, melting and freezing) are responsible for significant amounts of warming and cooling.

Through these thermodynamic processes, the surface of the Earth (the ground and the ocean surface) and the Lower Troposphere (the bottom layer of the atmosphere) warm and cool.

There is a degree of latency involved, so observations tend to focus on averages.

The temperature at the surface of the Earth varies hugely, not only diurnally but over longer periods. These variations also have a geographic dimension (local and regional differences, and the changing nature of the land and ocean surface).

Satellite measurements are averaged and presented as daily, monthly and annual averages.

Weather forecasters model the thermodynamic processes. Numerical weather prediction is a chaotic initial value problem, where the accuracy of a forecast is critically dependent on how accurately the current state (initial value) of the atmosphere is defined.

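Long-term (more than a few weeks ahead) weather, or climate, forecasts are impossible to make reliably in this way, because small errors in the initial state grow until they swamp the forecast; this is sometimes called ‘the butterfly effect’.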
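Sensitivity to initial conditions can be illustrated with the Lorenz (1963) system, a three-variable textbook model of convection rather than a weather model. The sketch below is a minimal illustration, assuming the classic parameters (σ = 10, ρ = 28, β = 8/3) and a fourth-order Runge-Kutta step: two runs that start one part in a billion apart end up on completely different parts of the attractor.

```python
import math

def lorenz_rhs(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Time derivatives of the Lorenz-63 system with its classic parameters."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)

def rk4_step(state, dt):
    """Advance the state by one fourth-order Runge-Kutta step of size dt."""
    def shifted(base, slope, h):
        return tuple(b + h * s for b, s in zip(base, slope))
    k1 = lorenz_rhs(state)
    k2 = lorenz_rhs(shifted(state, k1, dt / 2))
    k3 = lorenz_rhs(shifted(state, k2, dt / 2))
    k4 = lorenz_rhs(shifted(state, k3, dt))
    return tuple(
        s + dt / 6.0 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )

def separation_history(start, perturbation=1e-9, dt=0.01, steps=4000):
    """Distance between two runs whose initial x values differ by `perturbation`."""
    run_a = start
    run_b = (start[0] + perturbation, start[1], start[2])
    distances = []
    for _ in range(steps):
        run_a, run_b = rk4_step(run_a, dt), rk4_step(run_b, dt)
        distances.append(math.dist(run_a, run_b))
    return distances

seps = separation_history((1.0, 1.0, 1.0))
for step in (500, 1000, 2000, 4000):
    print(f"t = {step * 0.01:.0f}: separation {seps[step - 1]:.3g}")
```

The tiny initial difference grows roughly exponentially until it is as large as the attractor itself, after which the two runs are effectively unrelated.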

Nevertheless, climate modellers often forecast doom and gloom such as runaway global warming causing climate breakdown.

But how do they do this?

Having agreed that initial-value forecasting is impossibly inaccurate in the longer term, they fall back on predictive, so-called fundamental, relationships around which they build their thermodynamically oriented models.

Two of these ‘fundamental’ inputs are driven by radiation (a quantum process) rather than by thermodynamics.

The first of these quantum-driven effects is warming by incoming radiation from the sun. During the day, the sun’s radiation (at least the portion that is not reflected into the cold of space) warms the surface of the Earth.

By day and by night, the warm surface of the Earth radiates heat (infrared radiation) out into space. This outward radiation is partially reduced by absorption that is referred to as ‘the greenhouse effect’.

Without this greenhouse effect, the surface of the Earth would be about 33-34 °C cooler than it is.
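The ~33 °C figure is conventionally obtained by comparing the Earth’s effective radiating temperature with its mean surface temperature. The sketch below is a minimal illustration, assuming a solar constant of 1361 W m⁻², a planetary albedo of 0.30 and a mean surface temperature of 288 K (typical textbook values, not taken from this page). The energy balance S(1 − α)/4 = σT⁴ gives roughly 255 K, about 33 K below the surface value.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4

def effective_temperature(solar_constant=1361.0, albedo=0.30):
    """Temperature (K) at which a blackbody emits what the planet absorbs from the sun."""
    absorbed = solar_constant * (1.0 - albedo) / 4.0  # averaged over the whole sphere
    return (absorbed / SIGMA) ** 0.25

t_eff = effective_temperature()
t_surface = 288.0  # assumed global mean surface temperature, K
print(f"effective temperature: {t_eff:.1f} K")
print(f"surface minus effective: {t_surface - t_eff:.1f} K")
```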

That increased concentrations of atmospheric greenhouse gases increase heat absorption is undeniable.

But which gases and by how much?

THE EQUATIONS OF CLIMATE MODELLERS

Climate modellers build a relationship between enhanced greenhouse gas absorption and temperature into their models.

The axiomatic inclusion of these relationships, based on the assertion that ‘Anthropogenic emissions cause Global Warming’, means that their models can only conclude that the assertion is true.

My models (the Cardinal Model and the Emissions-Temp Model) prove, to my satisfaction, that these relationships are wrong and mislead modellers into universally concluding that Anthropogenic emissions cause Global Warming and Climate Change.

The modellers usually express the 'greenhouse gas - warming relationship' as a 'forcing' Arrhenius (logarithmic-form) relationship.
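The logarithmic form is commonly written with the simplified expression ΔF = 5.35 ln(C/C₀) W m⁻² (Myhre et al., 1998), with warming then taken as ΔT = λΔF. The sketch below is a minimal illustration, assuming a pre-industrial reference of C₀ = 280 ppm. The sensitivity λ is left as an input because this page does not state the value the modellers use; the value printed at the end is simply back-calculated from the +0.47 °C direct warming quoted for 1850 to 2025.

```python
import math

def co2_forcing(conc_ppm, ref_ppm=280.0, coeff=5.35):
    """Simplified logarithmic (Arrhenius-form) CO2 forcing in W m^-2."""
    return coeff * math.log(conc_ppm / ref_ppm)

def direct_warming(conc_ppm, sensitivity, ref_ppm=280.0):
    """Warming in degrees C for a sensitivity in degrees C per W m^-2."""
    return sensitivity * co2_forcing(conc_ppm, ref_ppm)

forcing_2025 = co2_forcing(420.0)
print(f"forcing 280 -> 420 ppm: {forcing_2025:.2f} W m^-2")

# Every doubling adds the same forcing: the hallmark of the logarithmic form
print(f"forcing per doubling: {co2_forcing(560.0):.2f} W m^-2")

# Sensitivity that would reproduce +0.47 degrees C (illustrative back-calculation,
# not a published value)
implied_lambda = 0.47 / forcing_2025
print(f"implied sensitivity: {implied_lambda:.3f} degrees C per W m^-2")
```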

For CO2, they predict that the warming effect of the 1850 level of CO2 is about +6.39 °C (~19% of the 33 °C mentioned above) and that the increase from 1850 to 2025 (to ~420 ppm) directly adds a further +0.47 °C.

This is about one third of the observed +1.39 °C.

The climate modellers introduce further equations to explain this underwhelming 'theoretical' result.

They refer to these as ‘feedbacks’, which lead to the direct warming (+0.47 °C) being multiplied by a factor of three.
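In the standard linear feedback formalism, a direct response ΔT₀ is amplified to ΔT₀ / (1 − f), where f is the fraction of the response that is fed back. The sketch below is a minimal illustration; the fraction f = 2/3 is simply back-calculated from the factor of three mentioned above, not taken from any particular climate model.

```python
def feedback_gain(f):
    """Amplification of a direct response by a feedback fraction f (0 <= f < 1)."""
    if not 0.0 <= f < 1.0:
        raise ValueError("feedback fraction must lie in [0, 1)")
    return 1.0 / (1.0 - f)

def fraction_for_gain(gain):
    """Feedback fraction needed to produce a given amplification factor."""
    return 1.0 - 1.0 / gain

direct = 0.47  # degrees C, direct warming quoted above
f = fraction_for_gain(3.0)
print(f"feedback fraction: {f:.3f}")
print(f"amplified warming: {direct * feedback_gain(f):.2f} degrees C")
```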

These theoretically unsound ‘sensitivity’ equations are a mix of quantum and thermodynamically driven relationships.

Most involve interaction with water, which is asserted to be ‘sensitive’ to increases in CO2.

Water is generally recognised as the most important greenhouse gas, acting either through enhanced absorption of heat by more humid air or through variations in clouds, which either trap more heat (increased cloud cover) or allow more of the sun’s rays through (reduced cloud cover).

There is little evidence other than selected circumstantial observations to back up the use of any of these ‘climate-warming’ equations.

The form and magnitude of these climate equations are born of the CO2 obsessed dogma of the Climate Change Anointed.

They have no proven (by observation or experiment) scientific basis.

Just saying 'It's got hotter and CO2 has increased so CO2 must take the blame' is an emotional belief-driven reaction and proper scientists should be ashamed to go along with it.

President Obama's famous (and egregiously misleading) 2013 tweet “Ninety-seven percent of scientists agree: Climate Change is real, man-made and dangerous” wrongly implied that 'the science is settled' - at least according to the consensus 'scientists'.

However, ‘consensus’ is a political concept and has no place in science.

The science is not settled.

My contribution to the debate is to argue that sound models, built on the scientific method and uncontaminated by obsessive anthropocentric dogma, indicate that very little warming results from increasing CO2 (and other gases) in the atmosphere.

Aims of ‘The Emissions-Temp Model’

The analysis discussed here is my attempt to link increased levels of atmospheric greenhouse gases to increased surface temperature.

In this analysis, I have tried to find out whether any statistically significant empirical relationships exist.

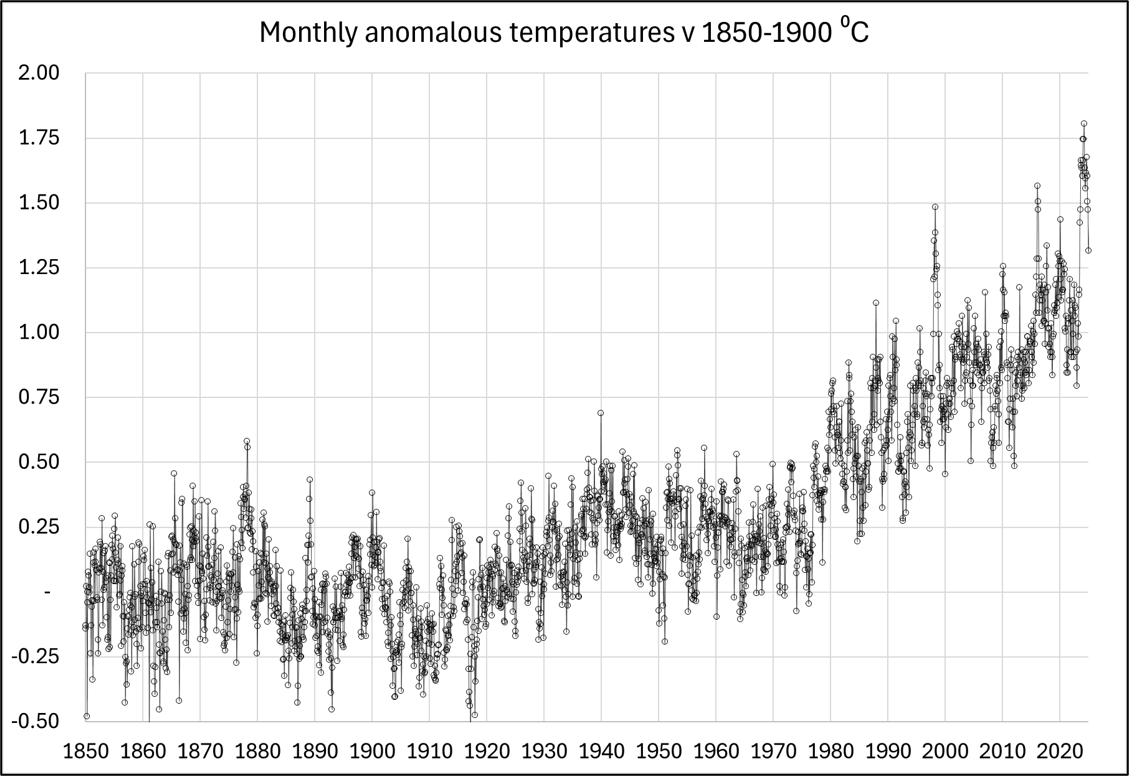

My investigation is based on monthly global average measurements.

The period of the analysis is from 1850 to 2025 – a period made up of 2,100 months.

Earlier temperature data are from the Berkeley Earth temperature dataset (high-resolution land and ocean time series data and gridded temperature data). Their global datasets begin in 1850.

From the end of 1979, satellite temperature measurements have been used.

One further complication is that monthly temperatures are reported as anomalies. This means that any reported temperature is expressed as the warming or cooling relative to that calendar month’s average over many years.

Satellite temperatures are reported against 1991 to 2020 averages (the WMO 'standard').

The whole of the dataset I use has been re-based to a ‘base period’ 1850-1900 average of zero.
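The anomaly calculation and re-basing described above can be sketched as follows. This is a minimal illustration, assuming records of (year, month, value). The single-offset re-basing is one simple choice (re-basing each calendar month separately is another), and the function names are hypothetical rather than those used by Berkeley Earth or any satellite product.

```python
import math
import statistics
from collections import defaultdict

def monthly_climatology(records, base_start, base_end):
    """Mean value for each calendar month over the base period (inclusive years)."""
    sums, counts = defaultdict(float), defaultdict(int)
    for year, month, value in records:
        if base_start <= year <= base_end:
            sums[month] += value
            counts[month] += 1
    return {month: sums[month] / counts[month] for month in sums}

def to_anomalies(records, base_start, base_end):
    """Express each value as its difference from that calendar month's base mean."""
    clim = monthly_climatology(records, base_start, base_end)
    return [(year, month, value - clim[month]) for year, month, value in records]

def rebase(anomalies, base_start, base_end):
    """Shift a whole anomaly series so that its mean over a new base period is zero."""
    base = [value for year, _, value in anomalies if base_start <= year <= base_end]
    offset = sum(base) / len(base)
    return [(year, month, value - offset) for year, month, value in anomalies]

# Synthetic absolute temperatures: a seasonal cycle plus a slow trend (not real data)
records = [
    (year, month, 14.0 + 2.0 * math.cos(2 * math.pi * (month - 1) / 12) + 0.01 * (year - 1850))
    for year in range(1850, 1910)
    for month in range(1, 13)
]
anoms = to_anomalies(records, 1850, 1900)
rebased = rebase(anoms, 1880, 1900)
print(f"SD absolute:  {statistics.pstdev([v for _, _, v in records]):.2f}")
print(f"SD anomalies: {statistics.pstdev([v for _, _, v in anoms]):.2f}")
```

Removing each calendar month’s base-period mean strips out the seasonal cycle, which is why the anomaly series is far less variable than the absolute series in this synthetic example.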

As a measure of variability in temperatures, it may be noted that the standard deviation of the anomalous temperature dataset is 0.4 °C.

Anomalous temperatures are generally reported because they offer clarity for long-term trends.

The standard deviation of absolute monthly average temperatures is 0.9 °C (+/- 4.2 W m⁻²), more than double the variation in anomalous temperatures.
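The page quotes each radiative figure alongside a temperature equivalent but does not state the conversion factor. The sketch below back-calculates that factor from the pairs quoted on this page, assuming a single linear factor; the resulting ~4.7 W m⁻² per °C is an inference, not a figure stated by the author.

```python
# Radiative / temperature pairs quoted on this page: (W m^-2, degrees C)
QUOTED_PAIRS = {
    "greenhouse effect SD": (3.29, 0.7),
    "anomalous temperature SD": (1.9, 0.4),
    "absolute temperature SD": (4.2, 0.9),
}

def implied_factors(pairs):
    """Return the W m^-2 per degree C ratio implied by each quoted pair."""
    return {name: flux / temp for name, (flux, temp) in pairs.items()}

def flux_to_temp(flux, factor=4.7):
    """Convert a flux deviation to a temperature deviation.

    ASSUMPTION: a single linear factor, back-calculated from the quoted pairs
    (the source does not state it).
    """
    return flux / factor

for name, ratio in implied_factors(QUOTED_PAIRS).items():
    print(f"{name}: {ratio:.2f} W m^-2 per degree C")

# The 0.03 W m^-2 Cardinal Model deviation expressed in degrees C
print(round(flux_to_temp(0.03), 3))
```

The three pairs agree to within rounding, and the same factor turns the 0.03 W m⁻² Cardinal Model deviation into the 0.006 °C quoted earlier.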

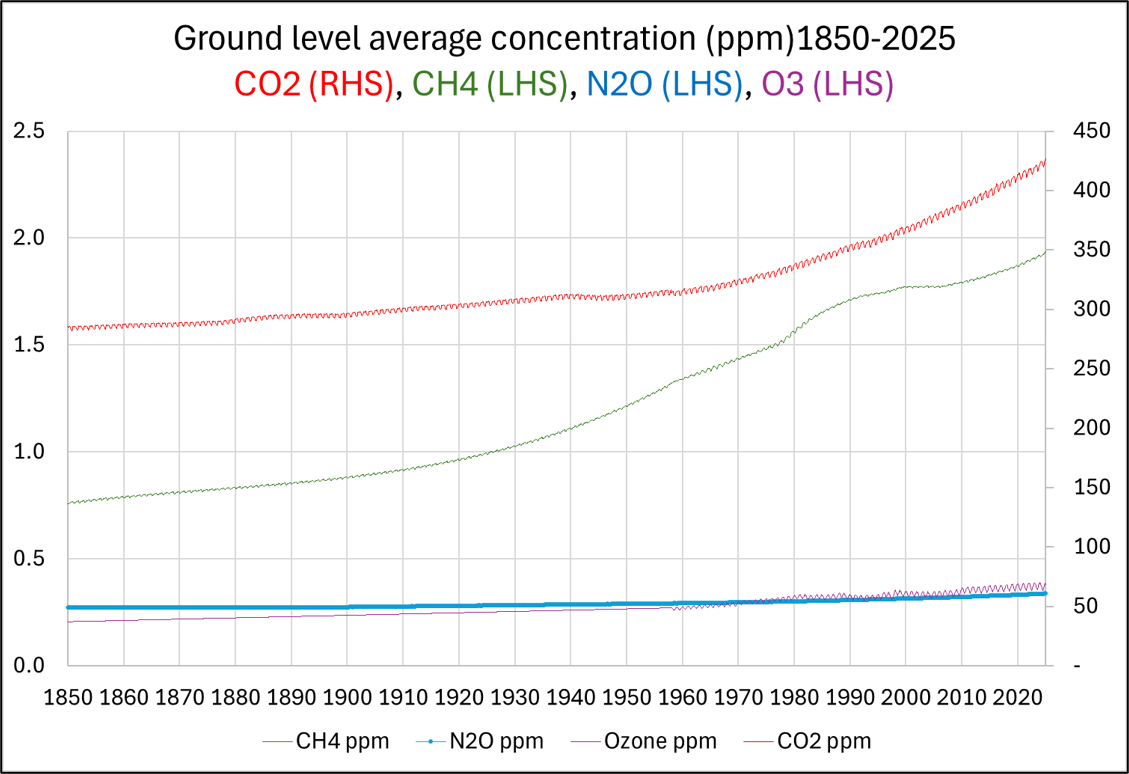

The study objective is to investigate whether there is an empirical correlation (and the form of that correlation) between these global monthly average surface temperatures and the monthly average levels of different greenhouse gases: water, carbon dioxide (CO2), methane (CH4), nitrous oxide (N2O) and ozone (O3).

Micro-pollutants resulting from human activities were investigated, using global population as a proxy, by testing whether a correlation exists between surface temperature and population.

For the 1850-2025 period, data were collected for each greenhouse gas other than water.

Of all these gases, CO2 is the most concentrated and evenly distributed. It persists in the atmosphere for a long time, so the global concentration is almost the same everywhere – geographically and altitudinally.

CH4 is widely distributed but being more reactive, it varies significantly both geographically and altitudinally. N2O and O3 are even less homogeneously spread.

Best efforts were made to ensure that the data represent monthly average global concentrations.

Water is more complicated. In its vapour form it is a radiation absorber with an average concentration of ~3,800 ppm (about 10 times greater than CO2).

In the form of clouds, it presents a near-insuperable obstacle to theoretical modelling.

THE TWO BASIC FORMS OF RELATIONSHIP

A correlation-relationship between surface temperature and greenhouse gas concentration has been offered by theoreticians.

This was first proposed by Arrhenius (in 1896) and subsequently endorsed by other researchers.

In their 2013 study, Wenyi Zhong and Joanna D. Haigh quantified the relative contributions of various gases to the Earth's natural greenhouse effect and explored how these contributions change as concentrations increase.

They concluded that water vapour remains the most significant greenhouse gas, contributing roughly 60% to the total effect under clear-sky conditions.

Carbon dioxide, they asserted, is the second most important gas, accounting for approximately 26% of the natural greenhouse effect.

Ozone contributes roughly 8%, and the other gases, methane and nitrous oxide, together account for the remaining 6%.

Zhong and Haigh specifically addressed the ‘saturation’ of CO2 and demonstrated, theoretically, that while the centres of absorption bands become saturated, the warming effect (radiative forcing) continues to increase roughly logarithmically with concentration.

Their logarithmic relationship mirrored the original contention by Arrhenius.

The discussion around the concept of saturation is explored in the pages ‘The Greenhouse Effect’.

Not everyone agrees with the interpretation in which the heat absorbed by CO2 adds to the total greenhouse effect in proportion to the logarithm of CO2 concentration and, in turn, directly increases the temperature at the Earth’s surface.

Where saturation processes are at work, a more appropriate mathematical relationship may be at play.

This is the hyperbolic tangent [tanh] relationship.

At low concentrations, the greenhouse effect rises steeply – as the logarithmic relationship also suggests.

As more and more absorption bands reach initial saturation, and the bands ‘broaden’, the absorption response curve begins to flatten out.

At extremely high concentration the [tanh] curve asymptotically approaches a limit.
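The contrast between the two shapes can be sketched numerically. This is an illustration only: the scale constants (a reference of 280 ppm for [ln] and a hypothetical saturation scale k = 200 ppm for [tanh]) are assumptions chosen to show the shapes, not the parameters fitted in this study. The local slope of each curve is compared at 280 ppm and at 420 ppm.

```python
import math

def ln_response(c, ref=280.0):
    """Arrhenius-form response: grows without limit, slope proportional to 1/c."""
    return math.log(c / ref)

def tanh_response(c, k=200.0):
    """Saturating response that approaches 1 asymptotically; k is a hypothetical scale."""
    return math.tanh(c / k)

def slope(func, c, h=1e-3):
    """Central-difference estimate of the local slope at concentration c."""
    return (func(c + h) - func(c - h)) / (2 * h)

for name, func in (("ln", ln_response), ("tanh", tanh_response)):
    ratio = slope(func, 420.0) / slope(func, 280.0)
    print(f"{name}: slope at 420 ppm is {ratio:.2f} x the slope at 280 ppm")

print(tanh_response(1e6))  # the [tanh] curve never exceeds its limit of 1
```

With these illustrative constants the [ln] slope falls to two thirds of its value between 280 and 420 ppm, while the [tanh] slope falls to roughly a quarter: the flattening described above.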

The main CO2 absorption bands become saturated at levels as low as ~5 ppm, a figure from a theoretical calculation based on the absorption cross-section curve for CO2 (see further the pages ‘The Greenhouse Effect’).
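What ‘saturation’ means for a single absorption band can be sketched with the Beer-Lambert law: the fraction of radiation absorbed along a path is 1 − exp(−τ), where the optical depth τ is proportional to concentration. The sketch below is a minimal illustration, assuming a hypothetical reference concentration at which the band centre reaches τ = 1 (set to the ~5 ppm mentioned above purely for illustration). It ignores band wings, pressure broadening and the altitude structure discussed in ‘The Greenhouse Effect’.

```python
import math

def absorbed_fraction(conc_ppm, tau_one_ppm=5.0):
    """Beer-Lambert absorption at a band centre: 1 - exp(-tau), tau = conc / tau_one_ppm."""
    tau = conc_ppm / tau_one_ppm
    return 1.0 - math.exp(-tau)

for conc in (1, 5, 20, 280, 420):
    print(f"{conc:>4} ppm: {absorbed_fraction(conc):.6f} of band-centre radiation absorbed")
```

Beyond a few multiples of the reference concentration, almost all band-centre radiation is already absorbed, so raising the concentration from 280 to 420 ppm changes the band-centre absorption by a negligible amount in this simplified picture.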

The argument for a hyperbolic tangent [tanh] relationship is that by the time CO2 concentrations approach the 1850 levels of over 250 ppm, the response curve is already flattening out.

The hyperbolic tangent function describes many natural processes involving saturation, equilibrium or approaching limits.

Among other things, it models velocity addition in Special Relativity and, in Statistical Mechanics, how individual atomic spins align with an external magnetic field, showing how magnetisation saturates as the field strength increases.

In neural signal processing, it mimics how a neuron remains dormant until a certain threshold is reached, then fires and eventually saturates at a maximum output; and in fluid dynamics, it describes the velocity profile of certain types of laminar flow and the shape of certain solitary waves and wave fronts, where competing effects (such as nonlinear steepening against dispersion or internal friction) balance out.

Although there are serious fundamental theoretical arguments for a hyperbolic tangent [tanh] relationship, this study uses observational empirical correlation to show that the [tanh] relationship fits much better than the Arrhenius [ln] relationship.

THERE ARE TWO ELEMENTS OF CONTRIBUTION

My investigations began by looking at three greenhouse gases as though they might be solely responsible for variations in surface temperature since 1850.

These three were CO2, H2O and a grouping of ‘Others’ made up of CH4, N2O, O3 and trace pollutants such as SF6.

For each of these three studies, both the Arrhenius [ln] and the [tanh] forms were investigated, on the basis that an empirical relationship might exist linking the concentration of a particular gas to the observed global (anomalous) temperatures on a month-by-month average basis.

The values of the empirical parameters characterising each of these two relationships were determined by minimising the variance between the temperatures calculated by the models and the observed temperatures.
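The parameter fitting can be sketched as a least-squares problem: for a model with an intercept, minimising the variance of the residuals is the same as minimising their sum of squares. The sketch below is a minimal illustration, assuming the forms T = a + b·ln(C/C₀) and T = a + b·tanh(C/k); the study’s exact parameterisation is not given here. For [tanh], a and b are solved exactly for each trial k, and k is chosen by a simple grid search. Names, grid and data are hypothetical.

```python
import math

def linear_fit(x, y):
    """Ordinary least squares for y = a + b*x; returns (a, b)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    b = sxy / sxx
    return my - b * mx, b

def variance(values):
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)

def variance_reduction(observed, modelled):
    residuals = [o - m for o, m in zip(observed, modelled)]
    return 1.0 - variance(residuals) / variance(observed)

def fit_ln(conc, temp, ref=280.0):
    """Fit T = a + b*ln(C/ref); returns ((a, b), modelled values)."""
    x = [math.log(c / ref) for c in conc]
    a, b = linear_fit(x, temp)
    return (a, b), [a + b * xi for xi in x]

def fit_tanh(conc, temp, k_grid):
    """Fit T = a + b*tanh(C/k), choosing k by grid search; returns ((a, b, k), modelled)."""
    best = None
    for k in k_grid:
        x = [math.tanh(c / k) for c in conc]
        a, b = linear_fit(x, temp)
        modelled = [a + b * xi for xi in x]
        score = variance_reduction(temp, modelled)
        if best is None or score > best[0]:
            best = (score, (a, b, k), modelled)
    return best[1], best[2]

# Synthetic check: temperatures generated from a tanh curve (not real data)
conc = [285.0 + 0.5 * i for i in range(271)]  # 285 to 420 ppm
temp = [-3.0 + 4.0 * math.tanh(c / 300.0) for c in conc]

ln_params, ln_model = fit_ln(conc, temp)
tanh_params, tanh_model = fit_tanh(conc, temp, [float(k) for k in range(100, 601, 10)])
print("ln  :", round(variance_reduction(temp, ln_model), 6))
print("tanh:", round(variance_reduction(temp, tanh_model), 6), "k =", tanh_params[2])
```

On data generated from a [tanh] curve the grid search recovers the generating scale and an essentially perfect fit, while the [ln] form leaves a small but systematic residual; with real data, of course, neither form is guaranteed to win.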

The first model investigated was:

The Simple Model:

where observed temperature variations might be explained by a single equation form. The single-form-relationships that were tested were Arrhenius-form [ln] and hyperbolic tangent [tanh]-form.

The Simple Model did not work very well. It gave poor to very poor fits to observed temperatures. Therefore, a second model was investigated.

The Second Model:

where contributions from greenhouse gases were split into two distinct causal categories.

The first contribution relates to the effect of altitude temperature profile on the greenhouse effect as it would be at 1850 concentration levels.

This altitude temperature profile contribution of the Second Model (its effect on existing gas levels) is explained in the pages ‘The Greenhouse Effect’. It relates to the effect of the temperature profile (with emission scaling as temperature raised to the fourth power, per the Stefan-Boltzmann law) on the heat absorbed, transmitted and eventually radiated to space by any given concentration of gas.
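The fourth-power dependence can be illustrated with the Stefan-Boltzmann law, F = σT⁴. The sketch below is a minimal illustration, assuming blackbody emission and two illustrative temperatures (288 K for the surface and a hypothetical 220 K for a cold upper-tropospheric layer). The colder layer emits roughly a third as much, and each 1 K change matters more at the warmer level.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4

def blackbody_flux(temp_k):
    """Emitted flux in W m^-2 for a blackbody at temp_k kelvin."""
    return SIGMA * temp_k ** 4

def flux_per_kelvin(temp_k):
    """Derivative dF/dT = 4*sigma*T^3: extra flux per 1 K of warming."""
    return 4.0 * SIGMA * temp_k ** 3

for label, t in (("surface", 288.0), ("upper layer (hypothetical)", 220.0)):
    print(f"{label}: {blackbody_flux(t):.1f} W m^-2, {flux_per_kelvin(t):.2f} W m^-2 per K")

print(f"ratio: {blackbody_flux(288.0) / blackbody_flux(220.0):.2f}")
```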

The second contribution relates to the increase in concentration since 1850, for which the Simple Model had tried, but failed, to validate a significant correlation.

The Analysis:

The starting point for the analysis was to see whether a simple linear relationship could explain the variation in observed temperature. This simple linear relationship explains 65% of the variance.

The Simple Model improved this variance reduction from 65% to above 73%.

This level of variance reduction indicates that some correlation might exist, but little more than might be expected through pure coincidence.

The coincidence of “Temperatures have gone up – oh, and by the way, we’ve noticed CO2 has also risen” influenced Hansen, Bolin and others to assert a causal relationship between CO2 and surface temperature in the 1970s.
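Why a shared trend alone can yield a sizeable variance reduction can be sketched with two made-up series that have nothing in common except a steady upward drift over time. This is a minimal illustration with invented numbers (neither series is real data): regressing one on the other removes most of its variance even though neither is built from the other.

```python
import math

def r_squared(x, y):
    """Variance reduction from an ordinary least-squares fit of y on x (equals r^2)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy * sxy / (sxx * syy)

months = range(600)
# Two unrelated series: each is a linear trend plus its own, different wiggle
series_a = [0.002 * t + 0.1 * math.sin(t / 5.0) for t in months]
series_b = [0.5 * t + 10.0 * math.cos(t / 17.0) for t in months]
print(f"variance reduction: {r_squared(series_a, series_b):.1%}")
```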

The poor correlation found using the Simple Model was poorer for the Arrhenius [ln] form than for the [tanh] form.

Although this is not ‘good’ statistical evidence, it casts some doubt on whether the Arrhenius relationship is the ‘scientifically proved theoretical relationship’ it is made out to be.

By applying the Second Model, the variance reduction level moved up significantly to a much more ‘interesting’ 90%.

The improvement was much more marked for the [tanh] form than for the Arrhenius [ln] form, further strengthening the statistical evidence in favour of the [tanh] model.

The Arrhenius [ln] form gave such conflicting results for CO2 (and 'Others') that the investigation proceeded solely on the [tanh]-form basis.

Even a 90% explanation of variance does not prove a causal relationship exists, but it allows the following question to be answered:

“If we assume that CO2 and other gases enhance the greenhouse effect which, in turn, is associated with an increase in average surface temperature - can we isolate how much of this contribution is associated with increasing concentrations of greenhouse gases and how much is due to the levels of greenhouse gases existing in 1850?”

The IPCC assert that nearly all Global Warming (& hence Climate Change) is caused by anthropogenic emissions – principally CO2.

This is not an ‘associated with’ message but a clear assertion of causality.

The ‘Emissions-Temp Model’ analysis presented here assesses how closely temperature and greenhouse gas levels are associated.

Although there are strong indications that elevated greenhouse gas levels may be linked to tiny rises in global average temperature, there is no proof that even this tiny association is in any way causal.

Results similar to those linking temperature to CO2 alone were found when the link was to water alone or to ‘Others’ alone.

These investigations showed that a major reduction in variance could be explained by association with each of CO2, the ‘Others’ and water.

Not one of these could be selected as ‘the dominant relationship’ so a mixed model was investigated.
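A mixed model of this kind can be sketched as a multiple regression, T = a + b₁x₁ + b₂x₂ + b₃x₃, where each xᵢ is an already-transformed contribution (for example a [tanh] term for CO2, water or ‘Others’). The sketch below is a minimal illustration that solves the least-squares normal equations by Gaussian elimination; the feature construction and names are hypothetical. Note that with strongly co-trending inputs the individual coefficients can be poorly determined even when the overall fit is good.

```python
import math

def solve(matrix, rhs):
    """Solve a small dense linear system by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [b] for row, b in zip(matrix, rhs)]  # augmented matrix [A | b]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    solution = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        known = sum(aug[r][c] * solution[c] for c in range(r + 1, n))
        solution[r] = (aug[r][n] - known) / aug[r][r]
    return solution

def fit_mixed(features, y):
    """Least-squares coefficients [a, b1, b2, ...] for y = a + sum(b_i * x_i)."""
    rows = [[1.0] + list(obs) for obs in zip(*features)]  # design matrix with intercept
    p = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(p)]
    return solve(xtx, xty)  # normal equations: (X^T X) beta = X^T y

# Hypothetical, already-transformed contributions (stand-ins, not real gas data)
n = 240
x1 = [i / 100.0 for i in range(n)]
x2 = [math.sin(i / 7.0) for i in range(n)]
x3 = [math.cos(i / 13.0) for i in range(n)]
y = [0.2 + 0.5 * a - 0.1 * b + 0.05 * c for a, b, c in zip(x1, x2, x3)]

coeffs = fit_mixed([x1, x2, x3], y)
print([round(c, 6) for c in coeffs])
```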

As an end-note to this analysis, it must be pointed out that the results from ‘The Emissions-Temp Model’ are not as clear-cut as those from the Cardinal Model.

The Cardinal Model investigates the relationship of greenhouse gases to the greenhouse effect, seeking to add ‘fine structure’ to a theoretically proposed causal relationship. It explains 99.99% of the observed variance.

For ‘The Emissions-Temp Model’, however, not only are global average temperatures associated with variations in the greenhouse effect, but other factors are definitely at play, not least the effect of the sun’s heating rays.